Cybersecurity Risks Associated with AI-Powered Tools

Introduction

From self-driving cars to medical diagnosis, AI-powered tools are revolutionizing how we live and work. However, this technological leap has a dark side: a significantly expanded attack surface and new cybersecurity risks that are often overlooked. This article examines these risks one by one and offers practical tips for mitigating each of them.

1. Data Poisoning: The Trojan Horse in the Algorithm

One of the most insidious threats is data poisoning. AI models are trained on vast datasets; if these datasets are compromised – even subtly – the resulting AI system can be manipulated to produce flawed or malicious outputs. This can be achieved through several methods:

- Targeted Injection: Attackers might inject malicious data points into the training dataset, subtly influencing the model’s behavior. Imagine a spam filter trained on a dataset where legitimate emails are deliberately mislabeled as spam. The resulting filter would incorrectly flag legitimate emails, crippling its functionality.

- Backdoor Attacks: These attacks plant a hidden trigger during training, so the model behaves normally on ordinary inputs but produces an attacker-chosen output whenever the trigger appears. For instance, a facial recognition system could be trained to identify anyone wearing a particular pair of glasses as an authorized user, granting unauthorized access.

- Model Stealing: Although not a poisoning technique itself, model stealing (also called model extraction) is a related threat: attackers repeatedly query a deployed model and use its responses to train a replica that approximates its behavior, which they can then probe offline for weaknesses. This is particularly dangerous for proprietary AI systems.
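The targeted-injection scenario can be illustrated with a deliberately tiny model. The sketch below assumes a hypothetical spam filter that uses a single feature (the number of suspicious keywords in an email) and a nearest-centroid rule. Real filters are far more complex, but the mechanism is the same: mislabeled training points shift the decision boundary.

```python
from statistics import mean

def train_centroids(samples):
    """Nearest-centroid training: the mean feature value for each label."""
    by_label = {}
    for feature, label in samples:
        by_label.setdefault(label, []).append(feature)
    return {label: mean(values) for label, values in by_label.items()}

def predict(centroids, feature):
    """Assign the label whose centroid is closest to the feature value."""
    return min(centroids, key=lambda label: abs(feature - centroids[label]))

# Hypothetical training data: (suspicious keyword count, label).
clean = [(0, "ham"), (1, "ham"), (1, "ham"), (2, "ham"),
         (8, "spam"), (9, "spam"), (10, "spam"), (9, "spam")]

# The attacker injects legitimate-looking emails labeled as spam.
poisoned = clean + [(1, "spam"), (2, "spam"), (1, "spam"), (2, "spam")]

legit_email = 4  # a legitimate email that happens to contain a few flagged words
print(predict(train_centroids(clean), legit_email))     # ham
print(predict(train_centroids(poisoned), legit_email))  # spam
```

Four injected points pull the spam centroid from 9 down to 5.25, which moves the decision boundary from 5 to about 3.1, so ordinary emails with a handful of flagged words start landing in the spam folder.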

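Secret Tip: Employ robust data validation and anomaly detection during the training process, and audit the training data regularly. Differential privacy (adding calibrated noise so that no individual record strongly affects the result, protecting privacy while preserving aggregate trends) can also limit how much a small number of poisoned records influences the model. Federated learning (training models on decentralized data without sharing the raw data) reduces exposure of sensitive information, although it brings its own poisoning risk from malicious participants, so it complements validation rather than replacing it. Finally, recording cryptographic hashes of each version of the training data in an append-only ledger, such as a blockchain, creates a tamper-evident record that makes unauthorized changes detectable.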
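Two of these measures lend themselves to a short sketch. The code below assumes numeric training features held in plain Python lists and training records stored as JSON-serializable dictionaries. It flags outliers with the modified z-score (a median-based statistic that a handful of extreme points cannot easily skew) and computes a SHA-256 fingerprint of a dataset version, the kind of value one might store in a tamper-evident log. The 3.5 threshold is a common rule of thumb, not a universal setting, and the function names are illustrative.

```python
import hashlib
import json
from statistics import median

def robust_outliers(values, threshold=3.5):
    """Indices of values whose modified z-score exceeds the threshold.

    The modified z-score uses the median and the median absolute
    deviation (MAD), so a few poisoned points cannot drag the baseline.
    """
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # At least half the values equal the median; flag anything that differs.
        return [i for i, v in enumerate(values) if v != med]
    return [i for i, v in enumerate(values)
            if 0.6745 * abs(v - med) / mad > threshold]

def dataset_fingerprint(records):
    """SHA-256 over a canonical JSON encoding of the records, in order."""
    digest = hashlib.sha256()
    for record in records:
        digest.update(json.dumps(record, sort_keys=True).encode("utf-8"))
        digest.update(b"\n")  # record separator (compact JSON never contains a raw newline)
    return digest.hexdigest()

# Usage with hypothetical data.
feature = [10, 11, 9, 10, 12, 10, 95]
print(robust_outliers(feature))  # [6]

v1 = [{"text": "hello", "label": "ham"}]
v2 = [{"text": "hello", "label": "spam"}]  # one flipped label
print(dataset_fingerprint(v1) != dataset_fingerprint(v2))  # True
```

Sorting dictionary keys before hashing means two copies of the same data produce the same fingerprint regardless of key order, while any changed value, including a single flipped label, produces a different one.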

2. Adversarial Attacks: Exploiting AI’s Weaknesses

Adversarial attacks leverage the inherent vulnerabilities of AI models by introducing carefully crafted inputs designed to fool the system. These attacks can be subtle, adding almost imperceptible noise to an image or slightly altering audio input, causing the AI to misclassify or misinterpret the data.

- Evasion Attacks: These attacks aim to bypass security systems by subtly modifying input data to evade detection. For example, an attacker might alter an image slightly so that a facial recognition system no longer recognizes the person in it, even though a human still sees the same face.

- Poisoning Attacks (revisited): Poisoning, covered in the previous section, is also a form of adversarial attack; it targets the training data rather than individual inputs and alters the model's overall behavior.

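- Inference Attacks: These attacks analyze the model's outputs to extract sensitive information about its training data, for example whether a specific person's record was used to train the model (membership inference), even though the data itself is never directly revealed.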
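To make the evasion idea concrete, the sketch below applies the fast gradient sign method (FGSM) to a linear detector. The weights, bias, and input are hypothetical, and the linear model stands in for the far more complex networks used in, for example, facial recognition. For a linear score w·x + b, the gradient with respect to the input is simply w, so moving every feature by eps against the sign of its weight lowers the score as much as possible while changing no feature by more than eps.

```python
def score(weights, bias, x):
    """Linear decision score: w . x + b."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias

def detect(weights, bias, x):
    """1 means 'flagged', 0 means 'allowed'."""
    return 1 if score(weights, bias, x) > 0 else 0

def sign(v):
    return (v > 0) - (v < 0)

def fgsm_evade(weights, x, eps):
    """Step each feature by eps against the sign of its weight.

    The gradient of a linear score with respect to x is the weight
    vector, so this is the FGSM step that lowers the score.
    """
    return [xi - eps * sign(w) for w, xi in zip(weights, x)]

# Hypothetical detector and input.
weights, bias = [0.5, -0.25, 1.0], -0.5
x = [1.0, 0.5, 0.25]
x_adv = fgsm_evade(weights, x, eps=0.1)

print(detect(weights, bias, x))      # 1
print(detect(weights, bias, x_adv))  # 0
```

No feature moved by more than 0.1, yet the score dropped by eps times the sum of the absolute weights (0.175), which is enough to flip the decision from 0.125 to about -0.05.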

Secret Tip: Develop AI models that are robust to adversarial attacks. This involves techniques like adversarial training (training the model on adversarial examples to improve its resilience) and using more robust model architectures less susceptible to manipulation. Regular penetration testing and red teaming exercises, specifically designed to target the AI system’s vulnerabilities, are crucial.
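Adversarial training can be sketched for a logistic-regression classifier trained with plain stochastic gradient descent. This is a minimal illustration under stated assumptions (a tiny hypothetical dataset, a fixed learning rate, and FGSM as the only attack), not a production recipe: at every step it crafts an FGSM example against the current model and trains on it alongside the clean example, keeping the true label.

```python
import math
import random

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def sign(v):
    return (v > 0) - (v < 0)

def predict_proba(w, b, x):
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def fgsm_example(w, b, x, y, eps):
    """FGSM on the logistic loss: d(loss)/dx_i = (p - y) * w_i."""
    p = predict_proba(w, b, x)
    return [xi + eps * sign((p - y) * wi) for xi, wi in zip(x, w)]

def train_adversarial(data, eps, epochs=200, lr=0.1, seed=0):
    """SGD on clean examples plus FGSM examples crafted on the fly."""
    rng = random.Random(seed)
    data = list(data)  # copy so shuffling does not mutate the caller's list
    w, b = [0.0] * len(data[0][0]), 0.0
    for _ in range(epochs):
        rng.shuffle(data)
        for x, y in data:
            batch = [(x, y)]
            if eps > 0:
                # Attack the current model, then learn from the attack.
                batch.append((fgsm_example(w, b, x, y, eps), y))
            for xb, yb in batch:
                g = predict_proba(w, b, xb) - yb  # d(loss)/d(score)
                w = [wi - lr * g * xi for wi, xi in zip(w, xb)]
                b -= lr * g
    return w, b

# Hypothetical one-feature dataset: (features, label).
samples = [([-2.0], 0), ([-1.5], 0), ([1.5], 1), ([2.0], 1)]
w, b = train_adversarial(samples, eps=0.5)
```

Setting `eps=0` recovers ordinary training, which makes it easy to compare the two models on perturbed inputs. Because the adversarial examples are regenerated against the current weights at each step, the model keeps seeing the perturbations it is currently most vulnerable to.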

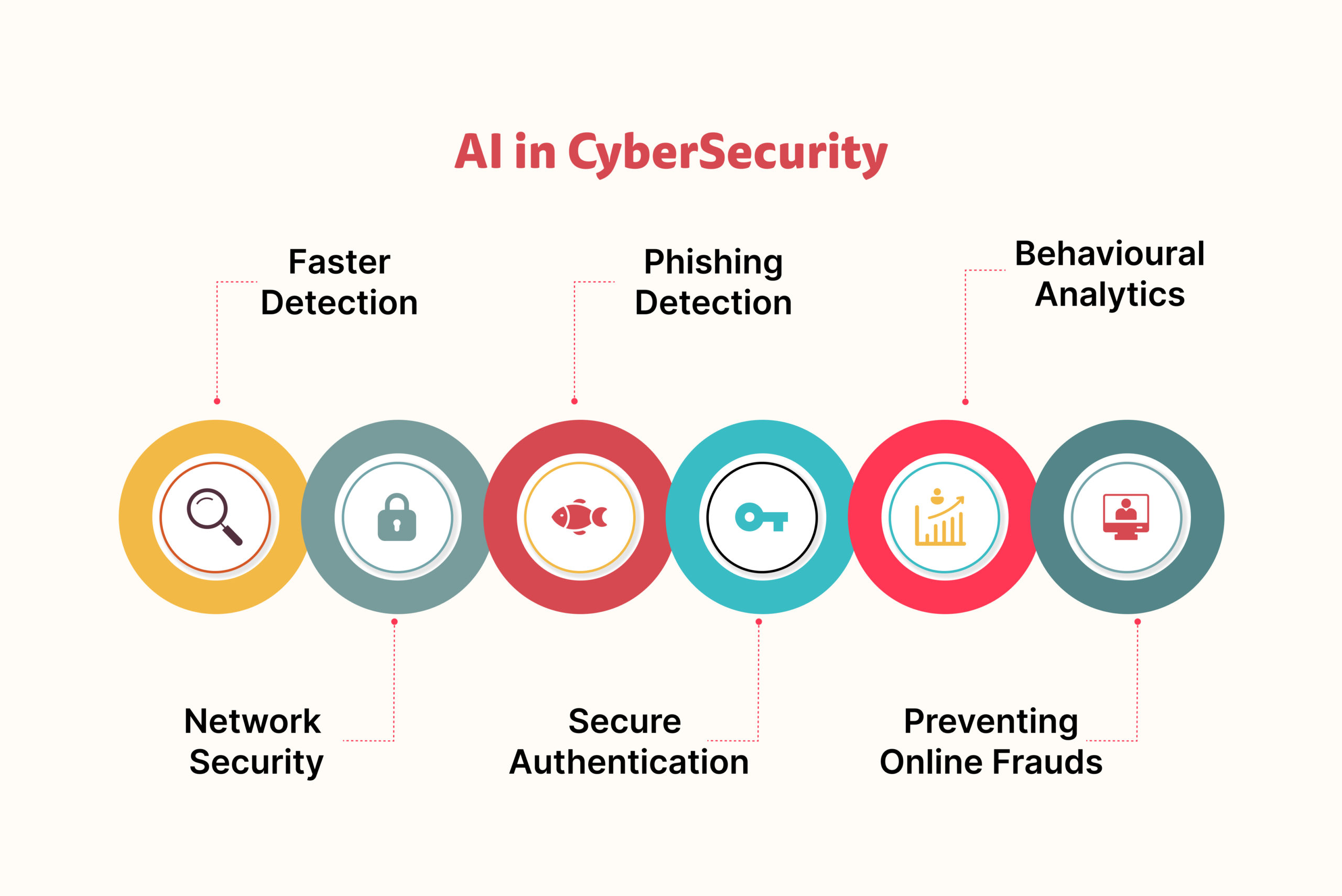

3. AI-Powered Malware and Phishing: The Next Generation of Threats

AI is not just a target; it can also be weaponized. Malicious actors are increasingly using AI to create more sophisticated and effective malware and phishing attacks.

- AI-generated Phishing Emails: AI can generate highly personalized and convincing phishing emails, making them difficult to distinguish from legitimate communications.

- Self-Evolving Malware: AI can be used to create malware that adapts and evolves to evade detection, making it significantly harder to combat.

- AI-powered Social Engineering: AI can analyze social media profiles and other online data to create highly targeted social engineering attacks, exploiting individual vulnerabilities.

Secret Tip: Implement advanced threat detection systems that can identify and respond to AI-powered attacks. This includes utilizing machine learning algorithms to detect anomalies in network traffic and email patterns, as well as employing behavioral analysis to identify suspicious user activities. Employee training on recognizing sophisticated phishing attempts is paramount.
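As a small example of an email-pattern check (a rule-based heuristic rather than a machine-learning model), the sketch below flags sender domains that sit within a small edit distance of a trusted domain, a common trait of convincing phishing emails. The trusted-domain list and the distance threshold of 2 are hypothetical and would need tuning to keep false positives manageable.

```python
def levenshtein(a, b):
    """Edit distance: minimum insertions, deletions, and substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1,                # deletion
                           cur[j - 1] + 1,             # insertion
                           prev[j - 1] + (ca != cb)))  # substitution
        prev = cur
    return prev[-1]

# Hypothetical list of domains the organization actually uses.
TRUSTED_DOMAINS = {"example.com", "example-payroll.com"}

def classify_sender(address, trusted=TRUSTED_DOMAINS, max_distance=2):
    """Return 'trusted', 'lookalike', or 'unknown' for a sender address."""
    domain = address.rsplit("@", 1)[-1].strip().lower()
    if domain in trusted:
        return "trusted"
    if any(levenshtein(domain, t) <= max_distance for t in trusted):
        return "lookalike"
    return "unknown"

print(classify_sender("ceo@example.com"))     # trusted
print(classify_sender("ceo@examp1e.com"))     # lookalike
print(classify_sender("news@unrelated.org"))  # unknown
```

A "lookalike" result is a signal for quarantine or a warning banner rather than proof of an attack; it catches typo-squatted domains such as a digit swapped for a letter, which a reader skimming an AI-polished message can easily miss.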

4. Model Interpretability and Explainability: The Black Box Problem

Many AI models, especially deep learning models, are notoriously difficult to understand. This "black box" nature makes it challenging to identify vulnerabilities and debug errors. The lack of transparency makes it difficult to trust the AI’s decisions, especially in critical applications like healthcare and finance.

Secret Tip: Prioritize the development of explainable AI (XAI) models. These models provide insights into their decision-making processes, making it easier to identify biases, vulnerabilities, and errors. Techniques like LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations) can help to understand the model’s predictions.
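SHAP builds on Shapley values from cooperative game theory. For a model with only a few features they can be computed exactly, which shows what SHAP approximates for larger models. The sketch below does not use the shap library; it assumes that a feature "absent" from a coalition is replaced by a baseline value (one common choice among several), and its cost grows exponentially with the number of features, so it is for illustration only.

```python
from itertools import combinations
from math import factorial

def shapley_values(f, x, baseline):
    """Exact Shapley attributions of f(x) relative to f(baseline).

    Features outside a coalition take their baseline value.
    """
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return f(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for k in range(n):  # size of the coalition that excludes feature i
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi

# Hypothetical model with an interaction between features 1 and 2.
def model(z):
    return 2 * z[0] + z[1] * z[2]

x, baseline = [1.0, 2.0, 3.0], [0.0, 0.0, 0.0]
phi = shapley_values(model, x, baseline)
print([round(p, 6) for p in phi])  # [2.0, 3.0, 3.0]
```

The additive feature 0 receives exactly its own contribution, the interaction term is split evenly between features 1 and 2, and the attributions sum to f(x) minus f(baseline) (here 8), the "efficiency" property that SHAP explanations also satisfy.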

5. Supply Chain Attacks Targeting AI Infrastructure

The reliance on third-party libraries, frameworks, and cloud services introduces significant vulnerabilities. Attackers could compromise these components, injecting malicious code into the AI system or stealing sensitive data.

Secret Tip: Implement rigorous security measures throughout the AI development and deployment lifecycle. This includes thorough vetting of third-party components, secure coding practices, and regular security audits of the entire AI infrastructure. Maintaining a Software Bill of Materials (SBOM) that tracks every component used in the AI system helps identify vulnerable components early.
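An SBOM pays off when it is checked against known advisories. The sketch below reads a CycloneDX-style JSON SBOM, looking only at its `components` list (including nested components) and their `name` and `version` fields, and reports components that appear in an advisory list. The advisory entries and the `example-ml-lib` and `example-tokenizer` packages are hypothetical; real vulnerability scanners match version ranges and package URLs against maintained databases rather than exact name-version pairs.

```python
import json

# Hypothetical advisory data: exact (name, version) pairs known to be affected.
ADVISORIES = {("example-ml-lib", "1.2.0"), ("example-tokenizer", "0.9.1")}

def iter_components(components):
    """Yield every component, including nested sub-components."""
    for component in components:
        yield component
        yield from iter_components(component.get("components", []))

def flag_vulnerable(sbom_text, advisories=ADVISORIES):
    """Return 'name@version' strings for SBOM components listed in advisories."""
    sbom = json.loads(sbom_text)
    return [f"{c.get('name')}@{c.get('version')}"
            for c in iter_components(sbom.get("components", []))
            if (c.get("name"), c.get("version")) in advisories]

sbom_text = json.dumps({
    "bomFormat": "CycloneDX",
    "specVersion": "1.5",
    "components": [
        {"type": "library", "name": "numpy", "version": "1.26.4"},
        {"type": "library", "name": "example-ml-lib", "version": "1.2.0"},
    ],
})
print(flag_vulnerable(sbom_text))  # ['example-ml-lib@1.2.0']
```

Running a check like this in the build pipeline, rather than only during audits, means a newly published advisory can be matched against every model service that ships the affected component.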

6. Lack of Regulatory Frameworks and Standards

The rapid pace of AI development has outstripped the development of adequate regulatory frameworks and industry standards. This lack of oversight creates a significant security risk, as there are limited guidelines for securing AI systems.

Secret Tip: Stay updated on emerging regulations and industry best practices. Actively participate in the development of security standards for AI systems. Advocate for greater transparency and accountability in the development and deployment of AI.

7. Insider Threats and Data Breaches

Human error and malicious insiders remain a significant threat to AI systems. Unauthorized access to training data, model parameters, or deployment infrastructure can have catastrophic consequences.

Secret Tip: Implement robust access control measures, including multi-factor authentication and role-based access control. Conduct regular security awareness training for employees, emphasizing the importance of data security and the potential risks associated with AI systems.
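Role-based access control and multi-factor authentication can be combined in a single authorization check. In the sketch below, the roles, permissions, and the rule that sensitive actions require a completed MFA challenge are all hypothetical examples; in a real deployment these would usually come from an identity provider or policy configuration rather than constants in code.

```python
from dataclasses import dataclass

# Hypothetical roles for an AI team, mapped to the permissions they grant.
ROLE_PERMISSIONS = {
    "data_scientist": {"read_training_data", "train_model"},
    "ml_engineer": {"read_model", "deploy_model"},
    "auditor": {"read_training_data", "read_model"},
}

# Hypothetical policy: these actions also require a completed MFA challenge.
MFA_REQUIRED = {"train_model", "deploy_model"}

@dataclass(frozen=True)
class Session:
    user: str
    roles: frozenset
    mfa_verified: bool

def is_allowed(session, permission):
    """Grant only if some role includes the permission and MFA rules are met."""
    granted = any(permission in ROLE_PERMISSIONS.get(role, set())
                  for role in session.roles)
    if not granted:
        return False
    return session.mfa_verified or permission not in MFA_REQUIRED

alice = Session("alice", frozenset({"ml_engineer"}), mfa_verified=False)
print(is_allowed(alice, "read_model"))          # True
print(is_allowed(alice, "deploy_model"))        # False (MFA not completed)
print(is_allowed(alice, "read_training_data"))  # False (no role grants it)
```

Denying by default (an unknown role or permission simply grants nothing) keeps mistakes in the role table on the safe side.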

8. Bias and Discrimination in AI Systems

AI models can inherit and amplify biases present in the training data, leading to discriminatory outcomes. This can have serious societal implications and can also be exploited by attackers.

Secret Tip: Carefully curate the training data to mitigate bias. Employ techniques like fairness-aware machine learning to ensure equitable outcomes. Regularly audit the AI system for bias and discrimination.
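A basic bias audit compares how often the model produces a favorable outcome for each group. The sketch below computes per-group selection rates, the demographic parity difference (highest rate minus lowest), and the disparate impact ratio (lowest rate divided by highest). These are only two of many fairness metrics, the audit data are hypothetical, and the default 0.8 threshold reflects the "four-fifths" rule of thumb from US employment guidelines rather than a universal standard.

```python
def selection_rates(predictions, groups):
    """Fraction of favorable (1) predictions within each group."""
    counts = {}
    for pred, group in zip(predictions, groups):
        favorable, total = counts.get(group, (0, 0))
        counts[group] = (favorable + (pred == 1), total + 1)
    return {g: fav / total for g, (fav, total) in counts.items()}

def fairness_report(predictions, groups, min_ratio=0.8):
    """Summarize group disparities and flag ratios below min_ratio."""
    rates = selection_rates(predictions, groups)
    highest, lowest = max(rates.values()), min(rates.values())
    ratio = lowest / highest if highest > 0 else 1.0
    return {
        "selection_rates": rates,
        "parity_difference": highest - lowest,
        "disparate_impact_ratio": ratio,
        "flagged": ratio < min_ratio,
    }

# Hypothetical audit data: model decisions (1 = approved) and group membership.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
report = fairness_report(preds, groups)
print(report["selection_rates"])  # {'a': 0.75, 'b': 0.25}
print(report["flagged"])          # True
```

A flag here is a prompt for investigation, not a verdict: differences in selection rates can have legitimate explanations, so the audit tells reviewers where to look.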

Frequently Asked Questions (FAQs)

Q1: How can I protect my AI model from data poisoning attacks?

A1: Implement robust data validation and anomaly detection, and audit your training data regularly. Differential privacy can limit the influence of individual records; federated learning protects raw data but does not by itself prevent poisoning.

Q2: What are the best practices for securing AI infrastructure?

A2: Implement strong access control, multi-factor authentication, and regular security audits. Vet third-party components carefully. Maintain an SBOM to track components.

Q3: How can I make my AI model more robust to adversarial attacks?

A3: Use adversarial training techniques. Employ more robust model architectures. Conduct regular penetration testing and red teaming exercises.

Q4: What is the importance of explainable AI (XAI)?

A4: XAI enhances transparency and trust, making it easier to identify vulnerabilities, biases, and errors. Techniques like LIME and SHAP can help.

Q5: What role do regulations play in AI security?

A5: Regulations provide a framework for responsible AI development and deployment, promoting security and mitigating risks. Stay informed about emerging regulations.

The cybersecurity risks associated with AI-powered tools are complex and multifaceted. Addressing them requires a multi-layered approach that combines robust security measures, ethical considerations, and proactive collaboration across industry and government. By understanding and applying the tips outlined above, organizations can significantly reduce their exposure to these emerging threats and pave the way for a more secure and responsible AI future. Continuous learning and adaptation are crucial, because the AI security landscape is constantly evolving.
